Overview

When we’re conducting the research phase of a digital health project, we’re not only looking for insights into a specific problem—e.g. why treatment adherence is low—we’re also looking for insights that help us overcome the potential inadequacies of how we fundamentally think about and see that problem. This latter kind of insight can completely change what it is we think we’re trying to solve and even open us up to novel, more effective uses of technology in the solution.

Take practice variation in wound care, for example. For decades, the problem was looked at through the lens of education: ‘if only we could help the generalist nurses, who confront the majority of wounds, to make better, more consistent assessments, like the specialist wound care nurses do’. Seeing the problem in this way, you would be forgiven for thinking the optimal solution could be a new, more engaging educational campaign delivered directly to the nurses’ phones via an app. If, however, the problem is looked at not through the lens of education but through the lens of logistics, then the optimal solution looks quite different. By using algorithms to automate procedural decision making for the 80% of invariant wounds assessed and treated by the generalist nurses, you free up the limited number of specialist nurses to focus solely on the variance and hard-to-heal outliers found in the remaining 20% of wounds.

This kind of insight—the kind that has the power to change what it is we think we’re trying to solve—requires a stepping back from the problem and a willingness to look at things from as many different points of view as possible. The traditional approach—one of market research, ad boards, focus groups and surveys—is, by itself, rarely able to deliver on this. As David Ogilvy once said, “people don’t think how they feel, they don’t say what they think, and they don’t do what they say”.

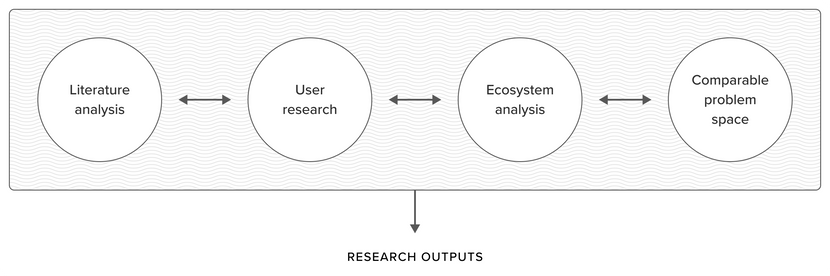

So how do we generate the kinds of insight that can open us up to new ways of looking at a problem? Our answer is something we call Insight Mining—a dynamic system of interdisciplinary practices that adapt to the context of our research. Insight Mining is not only a way of trying to better understand a problem: it’s a means by which we can play with how we fundamentally frame and formulate problems in healthcare in search of novel, more effective interventions.

Literature analysis

This is where we comb through the medical literature and emerging innovation in a therapeutic area. We’re especially interested in understanding:

- What the literature has to say about the needs and challenges of users—which we might not necessarily come across in one-to-one qualitative research.

- What models the current theories and interventions are built upon and how these interact with prevailing ideas both within and across disciplines, including information processing, anthropology, neuroscience, and psychology.

User research

Effective user research is critical to informing early product decisions, so we need to make the most of our time with prospective users at this stage of a product’s development. Some key principles include:

- Resist ad boards and focus groups. This has already been written about extensively, but a nice summary can be found in an article by UX designer Joe Natoli.

- Favour one-to-one conversations. We want to limit outside influence and group think, on both sides of the conversation.

- Conduct user research in the natural environment of the user. Not only can this simplify logistics, saving money and time, but it also allows people to speak freely from the comfort of their own home or place of work. When you take people out of their day-to-day environment it can alter their perspective—useful for helping internal stakeholders break out of ‘business as usual’; less useful when you’re trying to understand how a patient manages their condition at home, or how a clinician makes treatment decisions at the point of care. While having these conversations face-to-face can be preferable, conducting user research over a secure video conferencing tool creates a degree of separation that can make it easier for people to open up about sensitive topics.

- Have a guiding script, but allow the conversation to develop naturally. Good user research is a participation in the other person’s perspective, not an interview. It’s still important to address the legal and clinical necessities when interacting with patients and users, but a creative legal approach is necessary to ensure that disclaimers put participants at ease rather than making them feel like they’re being read their rights.

Ecosystem analysis

This is where we try to map out the systemic ‘whole’ that our problem is fitted within. We’re not only interested in capturing the more commonly analysed aspects—such as legacy processes, systems and value flows—we’re also interested in understanding how human attention, decision making and physical movement play a role. Some of the other considerations we might make include:

- How different parts of the system relate to each other and where the trade-offs lie.

- Which aspects of the system may have been emergent vs intentional[1].

- What unintended consequences could arise from changing different aspects of the system.

Comparable problem space

“To the person with the hammer, every problem looks like a nail”.

Searching for inspiration in analogous experiences and industries can open us up to new possibilities in healthcare. For example:

- What can we learn from how comparable problems have been addressed in other therapeutic areas?

- What might aspects of our problem look like if viewed through the lens of an entirely different industry or service[2]?

Research heuristics

Digital Health R&D projects come with their fair share of complexity, so we rely on both practices and principles to help us navigate the search space and generate insight. Some of the core, guiding principles we apply are:

- Resist the desire for certainty. When analysing qualitative research findings, we’re trying to infer insights from the conversations, rather than take everything users say literally. We want to view these conversations as a rich contextual tapestry that can complement and shape other research activities, rather than as explicit proof of users’ needs.

- Treat variance as the norm. One of the temptations with technology-based solutions is to sideline variance as error and scale the mean. When we’re dealing with measurable physiology and emotional states, however, variance is often the norm[3] (both within and across individuals), so rather than sideline this variance, we need to seek the structure hidden in the randomness.

- Embrace the singular otherness of people. Speaking with any stakeholder (be they patients, HCPs, caregivers, key opinion leaders or internal client SMEs) is our opportunity to understand the riddle of their lives. And this requires unprejudiced objectivity[4]: the intention is to participate in an individual’s perspective, not to simply listen out for that which reinforces our own position or beliefs, or that would allow us to infer overly simplistic patterns across a group.

- Seek the wisdom of crowds. In healthcare we have the privilege of listening to leading medical experts discuss the problems the industry is facing. This can have a heavy weighting on product decisions, as it should. But it’s important to question the experts[5]. Speaking to those working on the front lines and in the field, who are not necessarily considered to be experts, can be equally insightful. We want to play with the tension between experiences and perspectives across different healthcare practitioners, rather than simply defaulting to prestige.

- Beware ‘Indra’s Net’. As Iain McGilchrist said, “we tend to focus on certain things that stand forward to us immediately in the world and tend to neglect the background out of which they emerge and from which they are never separate.” When carrying out research, we can often be so involved in the details that we do not notice what is important about our observations as a whole. Taking the time to step back from a problem, condition or treatment to consider the connections, dynamics and relationships that are less clear or categorical can open us up to other points of view.

Outputs

Outputs vary depending on the context of the research, but if the research is directly related to a potential SaMD product then they will always include the core HFE research requirements, including reports on:

- User needs. Who our users are and what really matters to them.

- Ecosystem of use. Understanding the context in which the condition takes place, is diagnosed and/or is treated.

- Solution landscape. Where the most compelling opportunities for digital lie; the different directions that the immediate solution could take; and our hypotheses on the potential benefits of these directions.

1. Although written about the creation of design systems, rather than systems in general, Jordan Moore does an excellent job of explaining the difference between emergent and intentional systems.

2. One example of looking at a problem through the lens of an entirely different industry/service can be found in the story shared by Tal Golesworthy. From his talk on TED.com, “Tal Golesworthy is a boiler engineer – he knows piping and plumbing. When he needed surgery to repair a life-threatening problem with his aorta, he mixed his engineering skills with his doctors’ medical knowledge to design a better repair job.”

3. Dr Lisa Feldman Barrett has shown that physiological states do not follow an established pattern of universality or standardisation, as previously believed, but rather, these physiological states (such as emotions) are expressed differently both within and across individuals, depending on the context.

4. The attitude we strive to cultivate when speaking with users has been encapsulated by the words of Carl Jung. In a lecture delivered to a group of clergy in Switzerland, a considerable number of years ago, Carl Jung said, “Feeling comes only through unprejudiced objectivity. This sounds almost like a scientific precept. And it could be confused with a purely intellectual abstract attitude of mind. But what I mean is something quite different. It is a human quality: A kind of deep respect for the facts – for the man who suffers from them and for the riddle of such a man’s life.”

5. This has been inspired by the words of Avi Loeb. Avi is vocal about the need for the scientific community to rekindle a love of learning and curiosity. While there are many great articles and podcasts that we could send you to, here is an article from Harvard University that summarises what Avi is advocating for. In summary: “Research can be a self-fulfilling prophecy. By forecasting what we expect to find and using new data to justify prejudice, we will avoid creating new realities. Innovation demands risk-taking, sometimes contrary to our best academic instincts of enhancing our image within our community of scholars. Learning means giving a higher priority to the world around you than to yourself.”